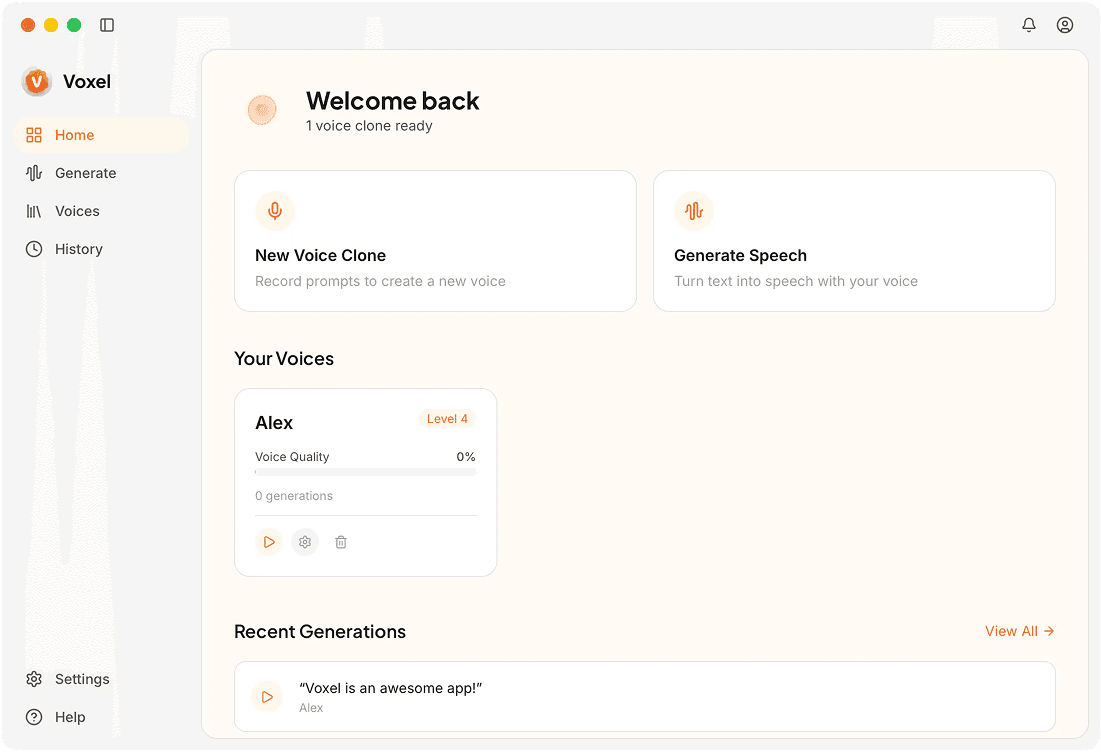

On-Device Voice Cloning, Powered by Your Mac's Own Hardware

Your Mac already has the hardware. Voxel leverages Apple Silicon's Neural Engine and GPU to train voice models and generate speech — fast, private, and fully self-contained.

The Technology Behind On-Device Cloning

Apple Neural Engine Integration

Key inference tasks are offloaded to the Neural Engine on M-series chips. This dedicated hardware accelerates voice generation while keeping energy consumption low.

GPU-Accelerated Training

Model training runs on the integrated GPU, which handles the parallel computations needed to learn a voice profile from your audio sample.

Efficient Memory Usage

Voice models are optimized to fit within unified memory on Apple Silicon. Even a Mac with 8 GB of RAM can train and run models without swapping.

Low Power Consumption

On-device processing on Apple Silicon is energy-efficient. You can generate hours of audio on a MacBook without significant battery drain.

Why On-Device Processing Changes the Workflow

No Waiting on Servers

Cloud services add round-trip latency and queue times during peak usage. On-device processing starts immediately, and how long it takes depends only on your hardware.

Predictable Performance

Your generation speed doesn't fluctuate with the number of other users online. The same input takes about the same time to process in every session.

Works in Restricted Environments

Studios, corporate offices, and government facilities often restrict cloud access. On-device cloning works within those constraints without any special configuration.

Put Your Mac to Work

Voxel uses Apple Silicon to clone and generate voices without any cloud dependency.

Get Started Free